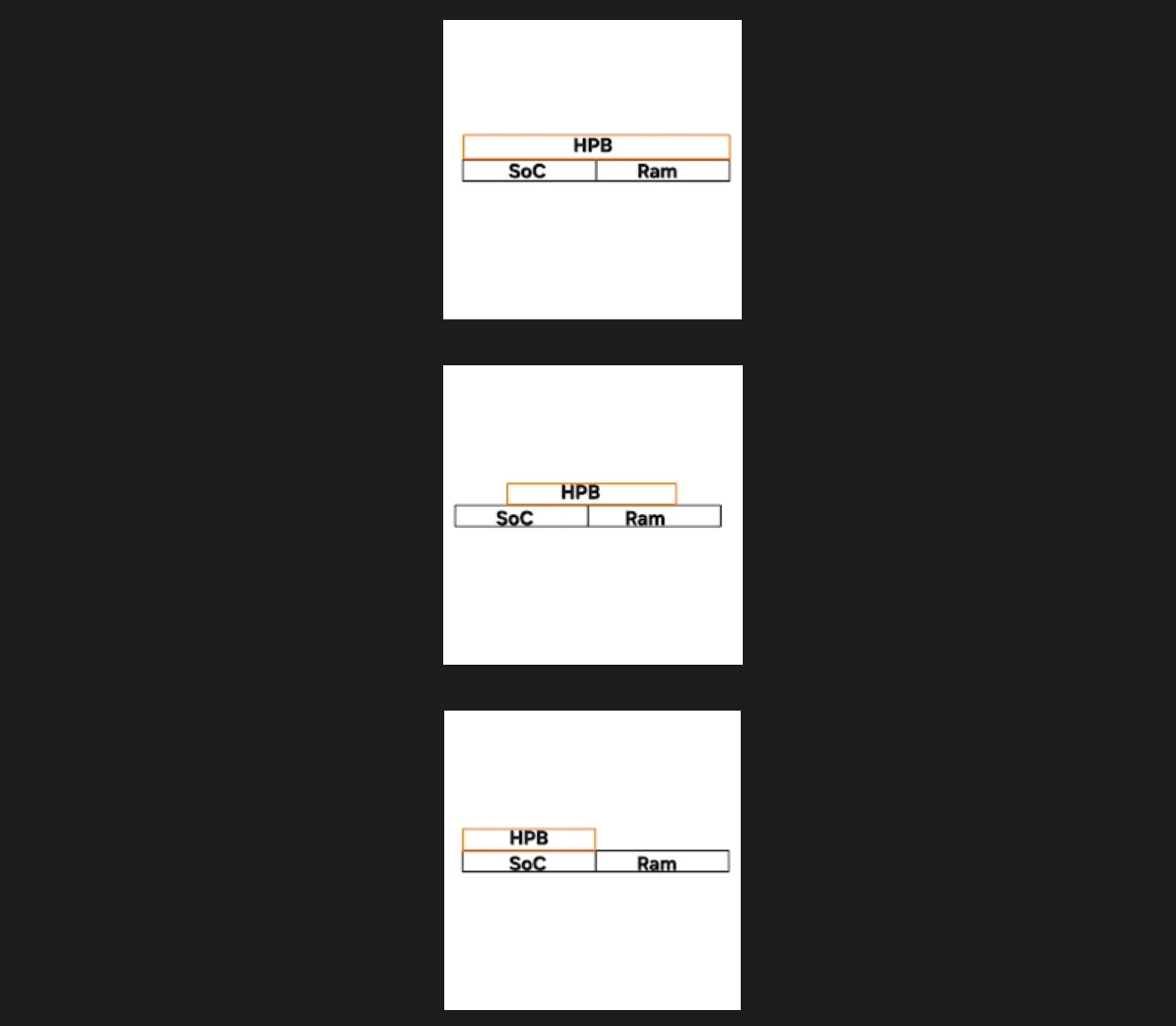

Samsung is reportedly developing a new memory approach aimed at making higher-performance on-device AI on phones more practical. According to a recent ETNews report, the company is working on a mobile HBM-style design built around a packaging method called Multi Stacked FOWLP (fan-out wafer-level packaging).

The idea is to adapt high-bandwidth memory concepts for mobile devices such as smartphones and tablets, where space, heat, and power limits are far tighter than they are in servers. That makes a direct copy of traditional HBM impractical, so Samsung is said to be pursuing a more compact structure tailored for handheld hardware.

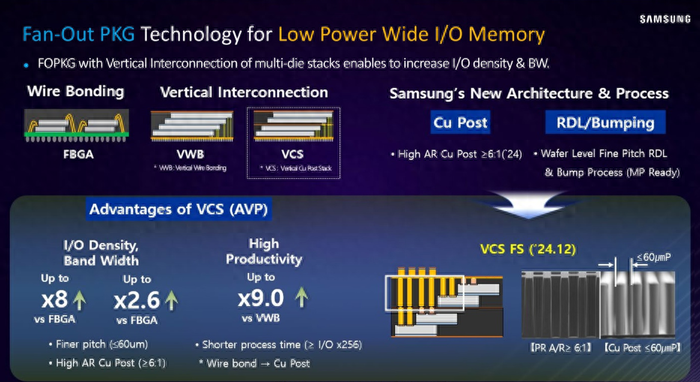

The report says the company plans to build on an improved VCS, or vertical copper stack, approach. In today’s mainstream LPDDR memory packages, wire bonding still limits the number of I/O connections, increases signal loss, and leaves less room for bandwidth and thermal gains. Samsung’s proposed direction is to use much taller and denser copper pillars so more interconnects can fit into the same footprint.

More specifically, the copper pillar aspect ratio (height relative to width) is said to rise from roughly 3:1 to 5:1 today to around 15:1 to 20:1. Taller, slimmer pillars mean more parallel connections in the same area, letting the package move more data at once, which is exactly the kind of improvement heavier local AI workloads would benefit from.

There is a catch, though. Once those copper pillars get thinner than 10 micrometers, they become easier to bend or break. To address that, Samsung is reportedly using Multi Stacked FOWLP, which molds the structure first and then extends the wiring outward, so the fine copper pillars get additional mechanical support.
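To see why higher aspect ratios push pillars toward that fragile range, here is a minimal sketch of the geometry. It assumes a hypothetical fixed pillar height of 150 micrometers (the report gives no height) and treats aspect ratio simply as height divided by width; the function name is illustrative only.

```python
def pillar_width_um(height_um: float, aspect_ratio: float) -> float:
    """Width of a pillar for a given height and height-to-width aspect ratio."""
    return height_um / aspect_ratio

# Hypothetical pillar height; not a figure from the report.
HEIGHT_UM = 150.0
FRAGILE_BELOW_UM = 10.0  # threshold the report cites for bending/breaking risk

for ratio in (3, 5, 15, 20):
    width = pillar_width_um(HEIGHT_UM, ratio)
    note = " (below 10 µm)" if width < FRAGILE_BELOW_UM else ""
    print(f"{ratio}:1 -> {width:.1f} µm wide{note}")
```

Under this assumption, moving from 5:1 to 20:1 shrinks each pillar from 30 µm to 7.5 µm wide, which is why the extra support from the packaging step matters.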

If the design works as intended, the report claims memory bandwidth could rise by about 15% to 30%. It could also let more I/O terminals fit into the same area, opening the door to higher capacity without growing the package beyond tight mobile size limits.
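As a rough illustration of what a 15% to 30% uplift means, peak memory bandwidth can be approximated as the number of data I/Os times the per-pin data rate, divided by 8 bits per byte. The baseline below (64 I/Os at 8.5 Gbps per pin) is a hypothetical example chosen for round numbers, not a figure from the report.

```python
def peak_bandwidth_gbs(io_count: int, gbps_per_pin: float) -> float:
    """Approximate peak bandwidth in GB/s: I/O count x per-pin Gbps / 8 bits per byte."""
    return io_count * gbps_per_pin / 8

# Hypothetical baseline package; not numbers from the report.
BASE_IO = 64
BASE_RATE_GBPS = 8.5

baseline = peak_bandwidth_gbs(BASE_IO, BASE_RATE_GBPS)
print(f"baseline: {baseline:.1f} GB/s")

# Apply the reported 15% to 30% uplift range to the hypothetical baseline.
for uplift in (0.15, 0.30):
    print(f"+{uplift:.0%}: {baseline * (1 + uplift):.1f} GB/s")
```

This prints roughly 68.0, 78.2, and 88.4 GB/s. Because the formula is linear in I/O count, squeezing more terminals into the same footprint raises peak bandwidth even if the per-pin rate stays the same.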

In practical terms, that could help future phones or XR headsets run larger local AI models with fewer memory bottlenecks. Still, the original report didn't provide benchmark conditions, so those gains should be viewed as technical targets rather than guaranteed real-world results.

For now, the technology is still in development, and there’s no confirmed production timeline. Industry observers quoted in the report believe it could eventually show up in a later Exynos 2800 revision or perhaps in Exynos 2900, but that part remains speculative.